
How To Create And Configure Your Robots.txt File

Posted on July 13 by Kevin Muldoon in Tips & Tricks.

The robots.txt file works in a similar way to the robots meta tag, which I discussed at great length recently. The main difference is that the robots.txt file advises search engines what they may crawl, whereas the robots meta tag controls whether a page is indexed. Placing a robots.txt file in the root of your domain lets you stop search engines from crawling sensitive files and directories. For example, you could stop a search engine from crawling your images folder or from indexing a PDF file that is located in a secret folder.

Major search engines will follow the rules that you set. Be aware, however, that the rules you define in your robots.txt file cannot be enforced. Crawlers for malicious software and poor search engines might not comply with your rules and index whatever they want. Thankfully, major search engines follow the standard, including Google, Bing, Yandex, Ask, and Baidu.

You might be surprised to hear that one small text file, known as robots.txt, could be the downfall of your website. In this article, I would like to show you how to create a robots.txt file for a WordPress website.

The Basic Rules of the Robots Exclusion Standard

A robots.txt file can be created in seconds. All you have to do is open up a text editor and save a blank file as robots.txt. Once you have added some rules to the file, save it and upload it to the root of your domain, so that it is served at /robots.txt. Please ensure you upload robots.txt to the root of your domain even if WordPress is installed in a subdirectory.

I recommend file permissions of 6[…]. Most hosting setups will set up the file with those permissions after you upload it. You should also check out the WordPress plugin WP Robots Txt, which allows you to modify the robots.txt file from the WordPress admin area. It will save you from having to re-upload your robots.txt file via FTP every time you modify it.

Search engines will look for a robots.txt file at the root of your domain. Please note that a separate robots.txt file is needed for each subdomain.

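To make the "root of your domain" rule concrete, here is a minimal Python sketch that derives the robots.txt location a crawler would check for any page URL. The function name `robots_txt_url` and the example.com addresses are assumptions made for illustration; they do not come from the article.

```python
from urllib.parse import urlsplit, urlunsplit


def robots_txt_url(page_url: str) -> str:
    """Return the robots.txt URL that applies to the given page URL.

    Only the scheme and host are kept, because the file always lives
    at the root of the host, never inside a folder such as /pdf/.
    """
    parts = urlsplit(page_url)
    return urlunsplit((parts.scheme, parts.netloc, "/robots.txt", "", ""))


# Hypothetical URLs: the same folder on two different subdomains.
for url in (
    "https://www.example.com/pdf/guide.pdf",
    "https://docs.example.com/pdf/guide.pdf",
):
    print(url, "->", robots_txt_url(url))
```

Each subdomain resolves to its own file, which is why a rule written for one host has no effect on another.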
It does not take long to get a full understanding of the robots exclusion standard, as there are only a few rules to learn. These rules are usually referred to as directives. The two main directives of the standard are User-agent, which names the crawler a rule applies to, and Disallow, which advises a crawler not to crawl a file, page, or directory. For example, you could add the following to your website robots.txt file to block all search engines from crawling your whole website:

    User-agent: *
    Disallow: /

The above directive is useful if you are developing a new website and do not want search engines to index your incomplete website.

Some websites use the Disallow directive without a forward slash to state that a website can be crawled. This allows search engines complete access to your website. The following code states that all search engines can crawl your website. There is no reason to enter this code on its own in a robots.txt file, as search engines crawl your website by default. However, it can be used at the end of a robots.txt file to cover all other user agents.

    User-agent: *
    Disallow:

You can see in the example below that I have specified the images folder using /images/ and not a full address beginning with www. This is because robots.txt uses relative paths, not absolute URL paths. The forward slash (/) refers to the root of a domain and therefore applies rules to your whole website. Paths are case sensitive, so be sure to use the correct case when defining files, pages, and directories.

    User-agent: *
    Disallow: /images/

In order to define directives for specific search engines, you need to know the name of the search engine spider (aka the user agent). Googlebot-Image, for example, will define rules for the Google Images spider.

    User-agent: Googlebot-Image
    Disallow: /images/

Please note that if you are defining specific user agents, it is important to list them at the start of your robots.txt file. You can then use User-agent: * at the end to match any user agents that were not defined explicitly.

It is not always search engines that crawl your website, which is why the term user agent, robot, or bot is frequently used instead of the term crawler. The number of internet bots that can potentially crawl your website is huge.
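The User-agent and Disallow rules above can be checked locally before you upload anything. The sketch below uses Python's standard `urllib.robotparser` module; the rule text combines the article's examples, while the helper name `build_parser` and the file paths being tested are assumptions made for illustration.

```python
from urllib.robotparser import RobotFileParser

# Specific user agent first, catch-all group last, as advised above.
RULES = """\
User-agent: Googlebot-Image
Disallow: /images/

User-agent: *
Disallow:
"""


def build_parser(text: str) -> RobotFileParser:
    """Parse robots.txt text held in a string instead of fetching it."""
    parser = RobotFileParser()
    parser.parse(text.splitlines())
    return parser


parser = build_parser(RULES)
checks = [
    ("Googlebot-Image", "/images/photo.png"),  # blocked by /images/
    ("Googlebot-Image", "/Images/photo.png"),  # different case, not blocked
    ("Bingbot", "/images/photo.png"),          # falls through to the * group
]
for agent, path in checks:
    print(agent, path, parser.can_fetch(agent, path))

# "Disallow: /" blocks the whole site for every crawler.
block_all = build_parser("User-agent: *\nDisallow: /\n")
print(block_all.can_fetch("Bingbot", "/anything.html"))
```

The second check illustrates the case-sensitivity point: `/Images/` and `/images/` are different paths as far as the rules are concerned.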
The website Bots vs Browsers currently lists around 1[…] user agents. The list contains browsers, gaming devices, operating systems, bots, and more. Bots vs Browsers is a useful reference for checking the details of a user agent that you have never heard of before. You can also reference User-Agents and User Agent String.

Thankfully, you do not need to remember a long list of user agents and search engine crawlers. You just need to know the names of the bots and crawlers that you want to apply specific rules to, and use the * wildcard to apply rules to all search engines for everything else. Below are some common search engine spiders that you may want to use: Bingbot (Bing), Googlebot (Google), Googlebot-Image (Google Images), Googlebot-News (Google News), and Teoma (Ask).

Please note that Google Analytics does not natively show search engine crawling traffic, as search engine robots do not activate JavaScript. However, Google Analytics can be configured to show information about the search engine robots that crawl your website. Log file analyzers that are provided by most hosting companies, such as Webalizer and AWStats, do show information about crawlers. I recommend reviewing these stats for your website to get a better idea of how search engines are interacting with your website content.

Non-Standard Robots.txt Rules

User-agent and Disallow are supported by all crawlers, though a few more directives are available. These are known as non-standard as they are not supported by all crawlers. However, in practice, most major search engines support these directives too.

Allow advises a search engine that it may crawl a file, page, or directory. Since search engines crawl your website by default, it can seem redundant. However, the rule is useful in certain situations.
For example, you can define a directive that blocks all search engines from crawling your website, but allows a specific search engine, such as Bing, to crawl it. You could also use the directive to allow crawling of a particular file or directory, even if the rest of your website is blocked.

    User-agent: Googlebot-Image
    Disallow: /images/
    Allow: /images/background-images/
    Allow: /images/logo.[…]

Please note that this code:

    User-agent: *
    Allow: /

produces the same outcome as this code:

    User-agent: *
    Disallow:

As I mentioned previously, you would never use the Allow directive to advise a search engine to crawl a website, as it does that by default.

Interestingly, the Allow directive was first mentioned in a draft of the robots.txt specification […]. Ask.com uses "Disallow:" to allow crawling of certain directories, while Google and Bing both take advantage of the Allow directive to ensure that certain areas of their websites are still crawlable.
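Here is a small, hedged way to try out Allow and Disallow together, again using Python's standard `urllib.robotparser`. One assumption to flag loudly: this parser applies the first matching line in file order, so the Allow line is placed before the Disallow line in the sketch. Google documents a most-specific-match rule instead, under which the order of the two lines would not matter. The image file names are made up for illustration.

```python
from urllib.robotparser import RobotFileParser


def parse_rules(text: str) -> RobotFileParser:
    """Parse robots.txt text held in a string."""
    parser = RobotFileParser()
    parser.parse(text.splitlines())
    return parser


# Allow is listed first because this parser stops at the first match.
image_rules = parse_rules(
    "User-agent: Googlebot-Image\n"
    "Allow: /images/background-images/\n"
    "Disallow: /images/\n"
)
print(image_rules.can_fetch("Googlebot-Image", "/images/background-images/sky.png"))
print(image_rules.can_fetch("Googlebot-Image", "/images/private.png"))

# "Allow: /" and an empty "Disallow:" both leave the whole site crawlable.
allow_all = parse_rules("User-agent: *\nAllow: /\n")
disallow_none = parse_rules("User-agent: *\nDisallow:\n")
print(allow_all.can_fetch("Bingbot", "/page.html"))
print(disallow_none.can_fetch("Bingbot", "/page.html"))
```

The first check is allowed and the second is blocked, which mirrors the idea of carving one crawlable folder out of a blocked directory.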

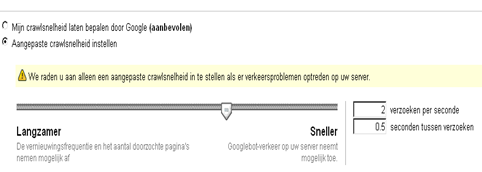

If you view Google's and Bing's robots.txt files, you will see Allow paired with Disallow rules in this way. As such, the Allow directive should be used in conjunction with the Disallow rule.

    User-agent: Bingbot
    Disallow: /files
    Allow: /files/eBook-subscribe.[…]

Multiple directives can be defined for the same user agent. Therefore, you can expand your robots.txt file to specify a large number of directives. It just depends on how specific you want to be about what search engines can and cannot do (note that there is a limit to how many lines you can add, but I will speak about this later).

Defining your sitemap will help search engines locate your sitemaps quicker. This, in turn, helps them locate your website content and index it. You can use the Sitemap directive to define multiple sitemaps in your robots.txt file. Note that it is not necessary to define a user agent when you specify where your sitemaps are located. Also bear in mind that your sitemap should support the rules you specify in your robots.txt file. That is, there is no point listing pages in your sitemap for crawling if your robots.txt file disallows them. The Sitemap directive can be placed anywhere in your robots.txt file. Generally, website owners list their sitemap at the beginning or near the end of the file.

    Sitemap: http://www.[…]

The Crawl-delay directive allows you to dictate the number of seconds between requests on your server, for a specific user agent.

    User-agent: teoma
    Crawl-delay: 1. 5

Note that Google does not support the Crawl-delay directive. To change the crawl rate of Google's spiders, you need to log in to Google Webmaster Tools and click on Site Settings, which can be selected via the cog icon. You will then be able to change the crawl delay from 5[…]. There is no way to enter a value directly; you need to choose the crawl rate by sliding a selector. Additionally, there is no way to set different crawl rates for each Google spider. For example, you cannot define one crawl rate for Google Images and another for Google News. The rate you set is used for all Google crawlers.

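As a rough illustration of the Sitemap and Crawl-delay directives, the sketch below reads both back with Python's standard `urllib.robotparser` (the `site_maps()` method needs Python 3.8 or later). The sitemap URLs and the delay of 10 seconds are placeholder values chosen for the example, not values from the article.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical file: two sitemaps (no user agent needed) and one delay rule.
RULES = """\
Sitemap: https://www.example.com/sitemap.xml
Sitemap: https://www.example.com/sitemap-images.xml

User-agent: teoma
Crawl-delay: 10
"""

parser = RobotFileParser()
parser.parse(RULES.splitlines())

# Sitemap lines are collected regardless of any User-agent group.
print(parser.site_maps())

# The delay applies only to the user agent it was declared for.
print(parser.crawl_delay("teoma"))      # 10
print(parser.crawl_delay("Googlebot"))  # None, no rule for this agent
```

A well-behaved crawler that honors Crawl-delay would wait that many seconds between requests; the parser only reports the value and does not enforce it.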
A few search engines, including Google and the Russian search engine Yandex, let you use the Host directive. This allows a website with multiple mirrors to define the preferred domain. This is particularly useful for large websites that have set up mirrors to handle large bandwidth requirements due to downloads and media. I have never used the Host directive on a website myself, but apparently you need to place it at the bottom of your robots.txt file. Remember to do this if you use the directive in your website robots.txt file.

    Host: www.mypreferredwebsite.[…]

As you can see, the rules of the robots exclusion standard are straightforward. Be aware that if the rules you set out in your robots.txt file […]. This is something I spoke about recently in my post "How To Stop Search Engines From Indexing Specific Posts And Pages In WordPress".

Advanced Robots.txt Techniques

The larger search engines, such as Google and Bing, support the use of wildcards in robots.txt. These are very useful for denoting files of the same type. An asterisk (*) can be used to match occurrences of a sequence. For example, the following code will block a range of images that have logo at the beginning.

    User-agent: *
    Disallow: /images/logo*

The code above would disallow images within the images folder such as logo[…]. For example, Disallow: about […] Disallow: about.html.

You could, however, use the code below to block content in any directory that starts with the word test. This would hide directories named test, testsite, test-1[…].

    User-agent: *
    Disallow: /test*/

Wildcards are useful for stopping search engines from crawling files of a particular type or pages that have a specific prefix. For example, to stop search engines from crawling all of your PDF documents within your downloads folder, you could use this code:

    User-agent: *
    Disallow: /downloads/*.[…]

And you could stop search engines from crawling your wp-admin, wp-includes, and wp-content directories by using this code:

    User-agent: *
    Disallow: /wp-*/

Wildcards can be used in multiple locations in a directive.
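To see how a wildcard rule behaves, here is a minimal Python sketch that turns a rule path into a regular expression. It is an approximation of the matching that Google and Bing describe, not their actual implementation. The function names are hypothetical, and the trailing `$` end-of-URL anchor is an assumption beyond what the article shows. Python's own `urllib.robotparser` does not expand `*`, which is why a hand-written matcher is used.

```python
import re


def rule_to_regex(rule_path: str):
    """Compile a robots.txt path rule, treating * as 'any characters'."""
    anchored = rule_path.endswith("$")  # optional end-of-URL anchor
    if anchored:
        rule_path = rule_path[:-1]
    # Escape each literal piece, then join the pieces with ".*".
    body = ".*".join(re.escape(piece) for piece in rule_path.split("*"))
    return re.compile(body + (r"\Z" if anchored else ""))


def rule_matches(rule_path: str, url_path: str) -> bool:
    """Rules match from the start of the path, so use re.match."""
    return rule_to_regex(rule_path).match(url_path) is not None


# Hypothetical paths checked against the article's rule patterns.
examples = [
    ("/images/logo*", "/images/logo.png"),
    ("/test*/", "/testsite/index.html"),
    ("/test*/", "/testing.html"),
    ("/wp-*/", "/wp-admin/options.php"),
    ("/downloads/*.pdf$", "/downloads/2014/report.pdf"),
]
for rule, path in examples:
    print(rule, path, rule_matches(rule, path))
```

Because rules are prefix matches, `/test*/` needs a slash somewhere after `test`, so it catches the `testsite` directory but not a file named `testing.html`.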
